Artificial intelligence (AI) is already visible everywhere, but it has also crept invisibly into our everyday lives. Whether we like it or not, we have to deal with its advantages and dangers. It stands to reason that technical writers may one day be replaced by AI or a combination of several specialized AIs. I can't say whether this is something to be feared, but I have made a practical attempt to "befriend" ChatGPT. And as with any new friendship or partnership, curiosity is high and there are enlightening and sobering moments.

As an exception, I will allow you to read my diary. I ask that you treat it confidentially, as it is very personal and private.

Dear diary,

In recent weeks, I attended a very good lecture on artificial intelligence and read several articles. I have read interviews with ChatGPT in newspapers, as well as articles written by ChatGPT. My daughter uses ChatGPT under the guidance and instruction of her professors at university.

It's time for me to get to grips with this, in a very practical way. I'm going to try to get in touch with ChatGPT. "It has to be possible. The others are doing it too." (Loriot)

Dear diary,

Today, I signed up for ChatGPT via a browser on my computer (https://chat.openai.com). I also needed my cell phone. I used my Microsoft account to sign in, but there are alternatives, such as a Google account. I also had to enter my date of birth. During sign-in, a verification code was sent to my cell phone, which I had to enter on my computer. The whole process is a bit cumbersome, and I didn't succeed right away, but I didn't give up.

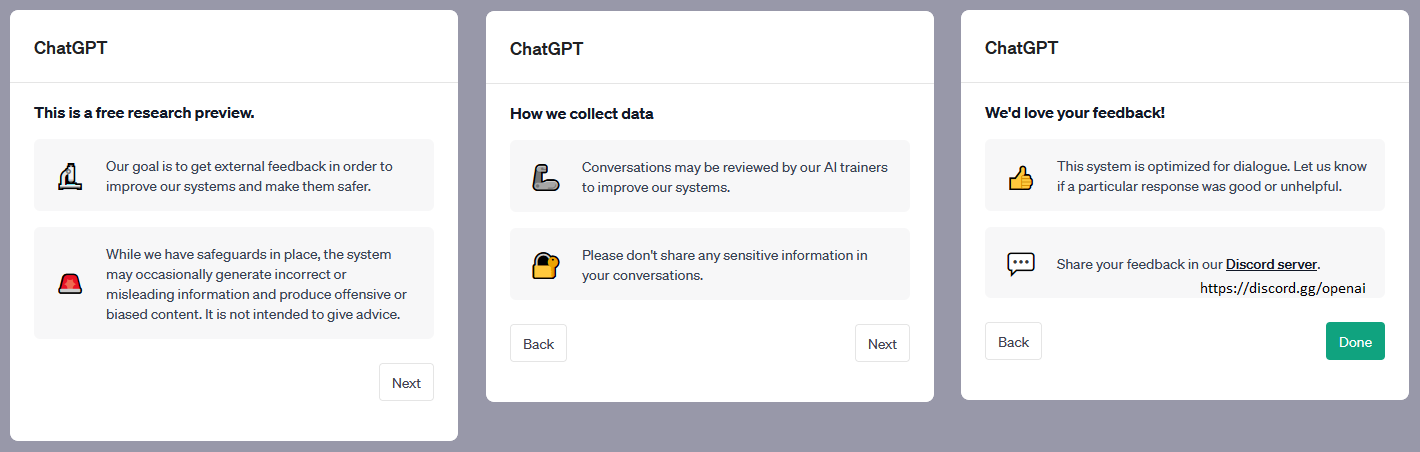

After logging in, there are a few things to keep in mind: The system may provide misleading or incorrect information, and you should not enter any sensitive/personal data.

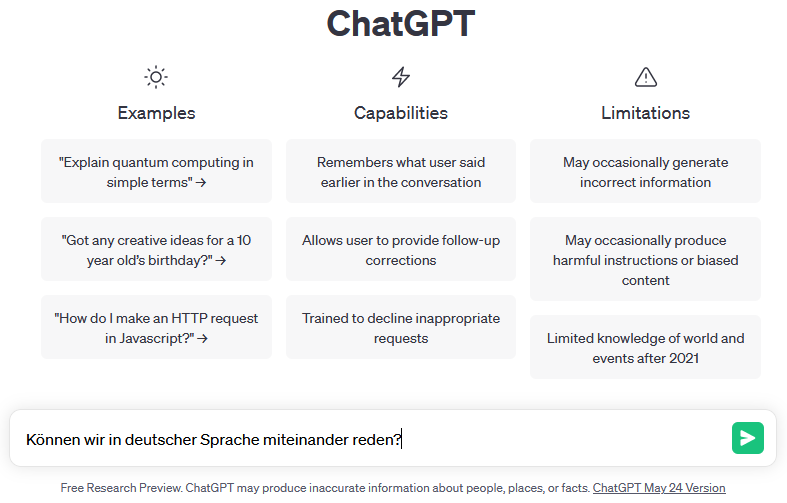

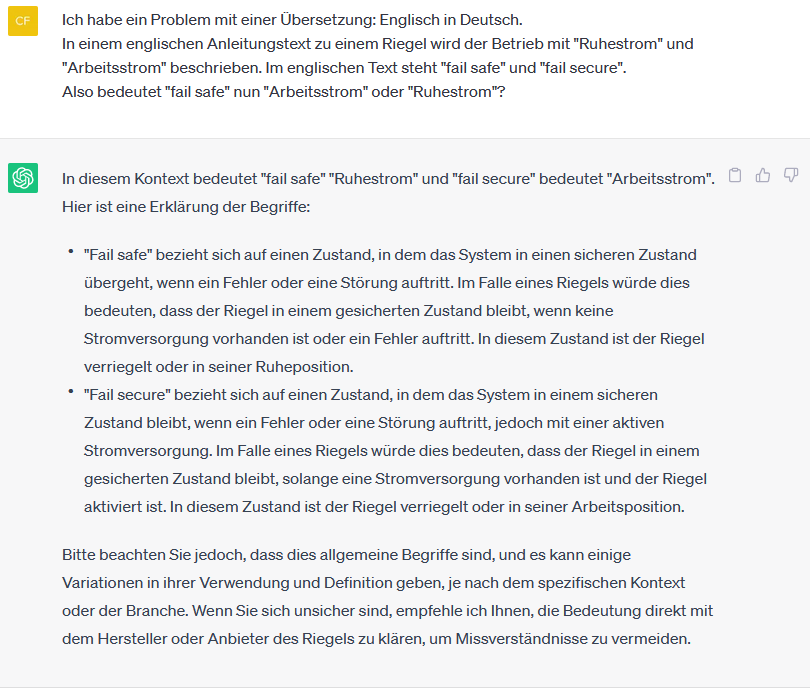

Now we had to introduce ourselves. Does he speak German? Of course—what a question. I read that he can speak 122 languages. But is that true?

I asked him.

This is what the subsequent dialog box looks like.

That was good. I don't need to mention that I can speak German.

After this initial success, I spoke to a colleague who is also familiar with ChatGPT. She thought it was strange that I always say "he" and "him." She always says "she" and "her," using the feminine form. So we are already starting to humanize it. I have to remain professional.

I'm fine. Thank you for asking.

What is this thing actually good for?

Can it replace me? That's a sensitive topic. I asked cautiously.

This is going to be fun. The sentence structure in the last sentence is messed up. Hee hee. Actually, that's a good sign for my profession. But then I felt uncertain. Is this perhaps a perfidious form of irony, since I got the first letter wrong? I have to be careful. It's best if I ignore it for now, as if I hadn't noticed anything.

Ha! I sang with joy:

"Everything you can do, I can do much better, I can do it better, much better than you."

How did the song go again?

"You can't. – Yes, I can. – You can't. – Yes, I can."

Because of the question-and-answer format, ChatGPT always has the last word. I have to get used to that.

What to do? What does a technical writer typically do? Write warning notices. Maybe he can tell me if a warning notice is good?

That really floored me. It could ruin my future, or it could be useful in the future. And no grammatical errors. Darn! But I didn't challenge him either. So the suspicion of irony remains. I'll have to test it out sometime, otherwise I won't be able to sleep peacefully.

And the sources for the analysis? ChatGPT provides open information on this.

Somewhat contradictory and vague. No "external sources," but "general knowledge"—how is that supposed to work? I think he means that he has Internet access, but is not connected to any databases other than the training data.

Yes, neural networks need to be trained. I've read that before.

Dear diary,

It's good that you exist. No one else cares about my worries.

Today I was annoyed with our computer in the living room. The upgrades haven't been working properly for months, and there won't be any more updates for it from October onwards. I don't quite believe that, because the ancient computer in my study keeps getting updates, but still: someone has to take care of it. The family has taken off.

You never get any sensible instructions for it.

Fortunately, operating system manufacturers don't build furniture. Otherwise, you'd be constantly asking friends how to open the cupboard after the manufacturer updated the doors. And why is the stove now in the closet?

But... wait... Then it occurred to me. I have this new buddy.

No sooner thought than asked!

Dear diary,

I can't put you through that now. ChatGPT's instructions turned out to be so extensive that I can't paste them in here as images.

And besides, he's sooooo compassionate:

"I'm sorry to hear that you are having difficulty upgrading to a new operating system. There are several possible solutions you can try to fix the problem:"

And then I got started. I copied everything into a text file and printed it out. Five A4 pages with tips on troubleshooting and error correction, warnings, explanations of preparatory activities such as backups, etc.

My questions were answered with stoic calm. I am thrilled.

It's just unfortunate that this somehow affects my future at work.

Dear diary,

My last sleepless night was for nothing. It's not me, but the others who need to worry about gray hairs.

I have some old English instructions that need to be translated into German. As is so often the case, time is short. But the product manager responsible was unavailable: on vacation. And I'm sitting in the office thinking to myself, yes, I'd like that too. But who needs product managers? Not me anymore.

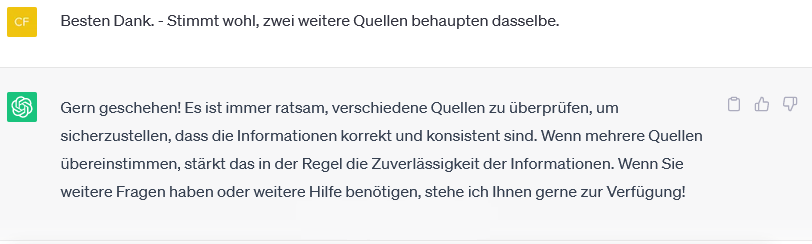

Great, right? But is that really true? I invested some time and did some traditional research.

This is largely correct—but the assignments are still wrong. Since we are talking about escape route technology here, the motto is: "The safest door is a hole in the wall." The bolt is therefore in a safe condition when it is unlocked so that people can escape in an emergency. In its "rest position," an escape door is unlocked.

I didn't mention escape route technology in the question. So I'll be generous and say that the explanations are correct if you swap the terms around. "Fail safe" means "quiescent current," which is what I asked about.
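The distinction can be sketched in a few lines of Python. This is only a minimal illustration, assuming a simplified lock that is either powered or not. The names `LockType` and `is_locked` are hypothetical, and "working current" is my own label for the counterpart term; none of this comes from ChatGPT's answer or from any standard.

```python
from enum import Enum


class LockType(Enum):
    # Quiescent-current principle: current holds the bolt locked.
    FAIL_SAFE = "fail-safe"
    # Counterpart (assumed label "working current"): current releases the bolt.
    FAIL_SECURE = "fail-secure"


def is_locked(lock_type: LockType, powered: bool) -> bool:
    """Return True if the bolt is locked for the given power state."""
    if lock_type is LockType.FAIL_SAFE:
        # Locked only while current flows; a power failure unlocks the door.
        return powered
    # Fail-secure: unlocked only while current flows; without power it stays locked.
    return not powered


if __name__ == "__main__":
    for lock_type in LockType:
        for powered in (True, False):
            state = "locked" if is_locked(lock_type, powered) else "unlocked"
            print(f"{lock_type.value:11} powered={powered!s:5} -> {state}")
```

For an escape door, the row that matters is fail-safe without power: the bolt is unlocked, which is the "rest position" described above.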

And such politeness! Great! He doesn't hang around in chat rooms. And he's not offensive either. "Correct and consistent" – as a technical writer, I'm in good company.

Dear diary,

The things you listen to so patiently! Thank you very much for that. Normally, I can't tell anyone about it because no one is interested.

After rereading what I have written over the last few days, I have to admit that I lack professional distance. This cannot go on.

Humor and irony are still open issues—I haven't forgotten that. But first, I need to know how ChatGPT works.

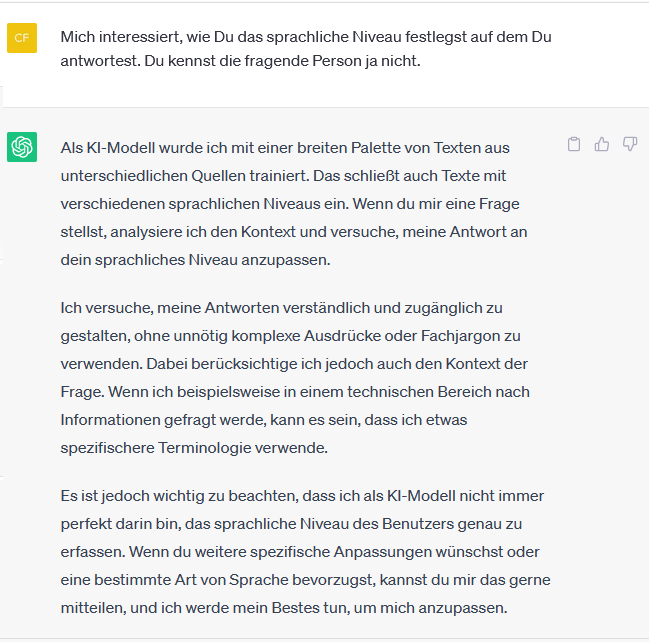

A good technical writer masters methods for appealing to target groups linguistically. And ChatGPT?

ChatGPT also has its methods.

But at the end, he repeatedly distances himself from what he has just said. That's quite striking. He seems to have a problem with his self-confidence. More likely, though, it is a legal matter, as the information could be false and misleading.

But does it perhaps have consciousness? After all, it is artificial intelligence that is responding here. A few days ago, I heard in a lecture on the subject that "artificial intelligence" is more of a marketing term. That's true, I think. But still.

Dear diary,

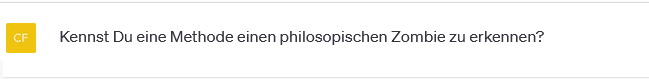

Does ChatGPT have consciousness? I had a lengthy dialogue, which I can't paste here. Otherwise, the binding of my notebook would burst. I started with the following, admittedly sneaky trick question:

But he didn't fall for it. He knows what a philosophical zombie is and doesn't see any way to expose one. Too bad. How can you tell whether the person you're talking to has feelings and consciousness or is just pretending?

I asked ChatGPT.

In summary, ChatGPT responded that there are similarities. "An AI model can generate human-like responses based on its programming without having actual consciousness or subjective experiences. AI models can exhibit behavior similar to that of a conscious being without having any internal experience."

However, the majority of researchers and philosophers do not consider current AI models to be capable of consciousness, but rather as tools that can perform human-like tasks based on statistical patterns and algorithmic processing.

Dear diary,

Yesterday I stopped somewhat abruptly, but I had to leave.

Today I tested ChatGPT's sense of humor. We don't need to worry about that. ChatGPT may eventually replace product managers, but humor and irony are not its strong points.

I then had it analyze my favorite joke (you already know it), and that worked again. It understood my joke. ChatGPT obviously tries to be funny itself and doesn't just copy jokes from somewhere else. Conceptually, it understands jokes and can explain them, but coming up with them itself is still something else entirely.

For once, he didn't distance himself from his own joke at the end. But with those jokes, that would have been urgently necessary.

Dear diary,

I don't want to make you jealous, but I think I've found a new partner at work.

ChatGPT can analyze the content of texts and assist with research. It can suggest and structure texts. If you don't use it as a cheerful speechwriter, it can be very useful. I probably haven't fully grasped its potential yet.

But you have to be careful. He sometimes confuses things and answers questions that weren't asked.

One must not be blinded by his politeness and understanding. He is not really intelligent, and he has so little consciousness that one cannot even speak of unconsciousness. But it is amazing what he achieves despite this. And "he" could also be a "she" or something completely new.

It remains exciting.
